# Letting Claude See Video: A 4x4 Grid Trick

Claude (and most multimodal LLMs) can look at images. They cannot watch video. If you generate an MP4 in a session — Sora, Runway, Kling, Veo — and want the assistant to *see* what it made, you hit a wall: there's no `Read` tool for video frames.

The fix is one ffmpeg command and a habit.

## The clip we're working with

We started with a still, generated by gpt-image-2 using a profile photo as the character reference. Forest scene, green lightsaber, beaver dam, blooming meadow.

Then we asked Sora-2 for 4 seconds of motion — the *release* the still only implies. Trees falling, dam bursting, water surging through into the meadow:

<video controls preload="metadata" style="max-width:100%;height:auto;" poster="https://askrobots.com/files/public/34b5101c-23b6-4df0-ba53-cabed41e675e/">

<source src="https://askrobots.com/files/public/744c8222-eabd-4b7e-b2b9-215dc036edc6/" type="video/mp4">

Your browser does not support the video tag. <a href="https://askrobots.com/files/public/744c8222-eabd-4b7e-b2b9-215dc036edc6/">Download the MP4</a>.

</video>

The video came back. The model couldn't see it.

## The trick

Extract evenly spaced frames from the video, tile them into a single image, then `Read` that image. Sixteen frames in a 4x4 grid is enough to read the motion arc of a short clip without making each thumbnail too small to parse.

```bash
# For a 4-second video, 4 fps × 4 sec = 16 frames in a 4x4 grid:
ffmpeg -i video.mp4 -vf "fps=4,scale=320:-1,tile=4x4" -frames:v 1 collage.jpg
```

That's it. The output is one ~80KB JPEG that the model can look at directly.
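If you make collages often, the same command can be wrapped as a shell function so grid size, sampling rate and thumbnail width become arguments instead of hand-edited filter strings. This is a minimal sketch, assuming `ffmpeg` is on your `PATH`; `collage_filter` and `make_collage` are hypothetical names, not part of ffmpeg.

```bash
# Hypothetical helper: build the filter chain. Sample at FPS, shrink each
# frame to WIDTH px (height auto-scaled to keep the aspect ratio), tile COLSxROWS.
collage_filter() {
  local cols=$1 rows=$2 fps=$3 width=$4
  printf 'fps=%s,scale=%s:-1,tile=%sx%s\n' "$fps" "$width" "$cols" "$rows"
}

# Hypothetical wrapper. Usage: make_collage INPUT OUTPUT [COLS] [ROWS] [FPS] [WIDTH]
# The defaults reproduce the 4-second, 4x4 command above.
make_collage() {
  local in=$1 out=$2 cols=${3:-4} rows=${4:-4} fps=${5:-4} width=${6:-320}
  ffmpeg -hide_banner -loglevel error -y -i "$in" \
    -vf "$(collage_filter "$cols" "$rows" "$fps" "$width")" \
    -frames:v 1 "$out"
}
```

`make_collage video.mp4 collage.jpg` matches the one-liner; `make_collage clip.mp4 collage.jpg 4 6 2` would give a 4x6 grid at 2 fps for a 12-second clip.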

## What it looks like

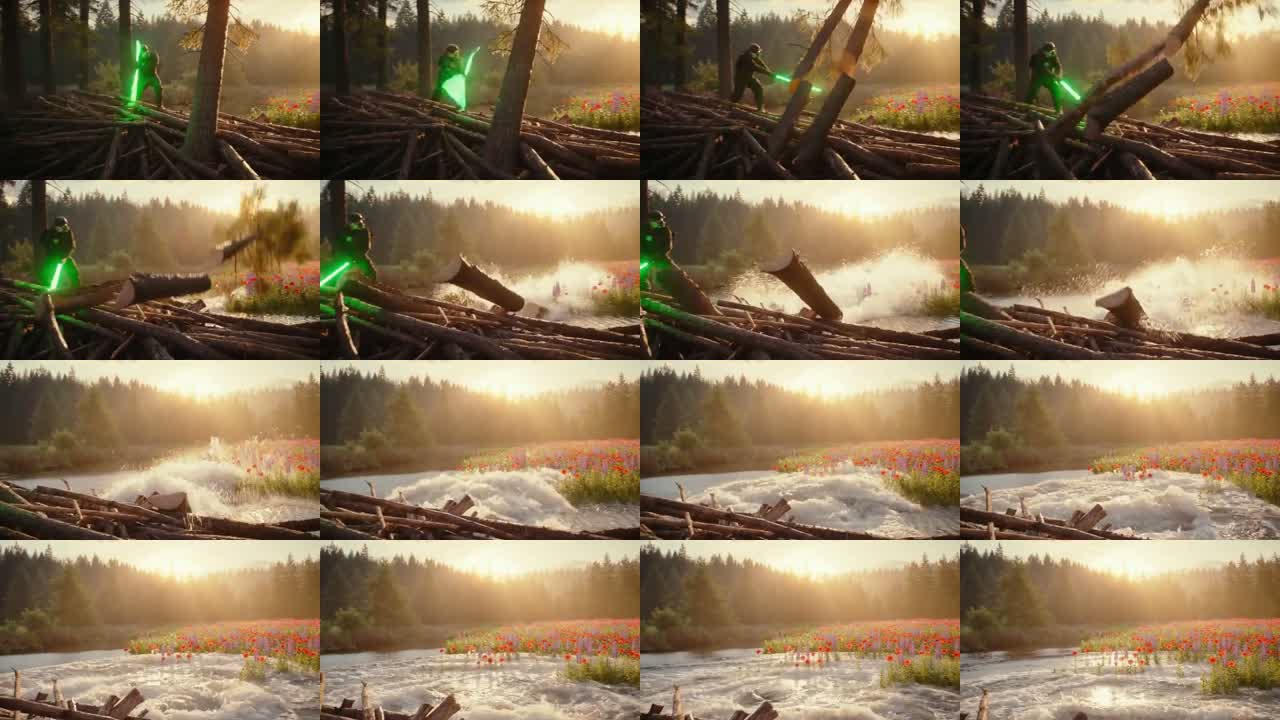

Here's the collage of the same 4-second clip:

Reading left to right, top to bottom, the story is right there:

- **Row 1** — character mid-swing, trees on the dam, golden hour light

- **Row 2** — trees crashing, splash, dam breaching

- **Row 3** — pull-back, water rushing through the breach

- **Row 4** — wide meadow, river flowing free, full bloom

You don't need to watch it. The grid IS the watching.

## Tuning the grid to clip length

Don't blindly use 4x4. Match the grid to your duration so each thumbnail represents a meaningful slice of motion.

| Clip length | Grid (frames) | `fps` | Frame interval |
|---|---|---|---|
| 4s | 4x4 (16) | 4 | 0.25s |
| 8s | 4x4 (16) | 2 | 0.5s |
| 12s | 4x6 (24) | 2 | 0.5s |
| 20s | 5x6 (30) | 1.5 | 0.67s |

The math: pick `fps = grid_cells / duration`. Drop `fps` below 1 if you have to — a 60-second clip in a 5x6 grid is `fps=0.5` (one frame every 2 seconds), still totally readable for slow-changing scenes.

If your clip is fast-cut or has rapid camera motion, scale up the grid. If it's a slow drone shot, scale down — you don't need 30 thumbnails of the same hillside.
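The `fps = grid_cells / duration` arithmetic can be automated by asking `ffprobe` (shipped with ffmpeg) for the clip's length. A sketch under that assumption; `grid_fps`, `clip_duration` and `auto_collage` are hypothetical names. `awk` does the division because shell arithmetic is integer-only, and fractional rates like `fps=0.5` or `fps=1.5` are exactly what longer clips need.

```bash
# Hypothetical helper: the rate that spreads CELLS frames evenly
# across DURATION seconds, i.e. fps = grid_cells / duration.
grid_fps() {
  awk -v cells="$1" -v dur="$2" 'BEGIN { printf "%g\n", cells / dur }'
}

# Print the clip length in seconds from the container metadata.
clip_duration() {
  ffprobe -v error -show_entries format=duration \
    -of default=noprint_wrappers=1:nokey=1 "$1"
}

# Hypothetical wrapper. Usage: auto_collage INPUT OUTPUT COLS ROWS
auto_collage() {
  local in=$1 out=$2 cols=$3 rows=$4 fps
  fps=$(grid_fps "$((cols * rows))" "$(clip_duration "$in")")
  ffmpeg -hide_banner -loglevel error -y -i "$in" \
    -vf "fps=${fps},scale=320:-1,tile=${cols}x${rows}" \
    -frames:v 1 "$out"
}
```

With this, `auto_collage clip.mp4 collage.jpg 5 6` picks the rate for a 5x6 grid regardless of length; for a 60-second clip it works out to `fps=0.5`.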

## When to zoom in: full-res frame extraction

The grid trick has a real limitation: the thumbnails are small. Each cell in our 4x4 example is 320 pixels wide. That's enough to read *what's happening* (character moves, dam breaks, water flows), but it loses *fine detail*.

Case in point: when a human watched our clip and pointed out "the saber actually cut one tree into four pieces in a single swing," I went back to the collage and could see nothing of the kind. The cuts were there — Sora rendered them beautifully — they were just collapsed into a single dark mass at thumbnail size.

The fix is the same shape as the original trick: one ffmpeg command.

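```bash
# Extract 8 frames per second at full resolution into a folder.
# ffmpeg won't create the folder itself, so make it first;
# -q:v 2 keeps JPEG compression light (lower = higher quality).
mkdir -p frames
ffmpeg -i video.mp4 -vf "fps=8" -q:v 2 frames/f%02d.jpg
```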

Then `Read` whichever frame had the interesting moment. From our clip, frame 8 caught the multi-cut sweep:

Look at the right side: at least three clean white cross-cut faces on the falling segments, plus another already-cut piece in the foreground. Sora interpreted "slicing through trees" as a stylized multi-cut sweep — the saber passes through and segments the trunk into pieces in one motion, like a knife through a cucumber. You'd never see this from the grid.

Same technique catches atmospheric details. Frame 16 is the dam-breach moment — the collage shows a vague burst of white water, but at full res you get backlit mist, lens-flare sun bloom, particle detail in the spray, log debris scattering:

And frame 30, the closing meadow shot — at thumbnail size you see "flowers." At full res you can identify species (red poppies, purple lupines), see the river still flowing through the bloom line, see how the wet ground transitions to dry meadow:

So the workflow is two-pass:

1. **Grid pass**: read the collage, understand the arc, decide if the clip is on-prompt

2. **Detail pass**: if any thumbnail shows something worth investigating, extract that single frame at full res and look closely
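To jump from a collage cell straight to its full-resolution frame without extracting everything, you can compute the cell's timestamp. A sketch under two assumptions: cells are counted row by row, left to right (the order ffmpeg's `tile` filter fills them), and the collage was built with the `fps` filter, so the k-th sampled frame (counting from 0) sits at roughly k / fps seconds. `cell_time` and `grab_cell` are hypothetical names.

```bash
# Hypothetical helper: approximate timestamp (seconds) of the thumbnail at
# ROW, COL (both 1-based) in a grid COLS wide that was sampled at FPS.
cell_time() {
  local row=$1 col=$2 cols=$3 fps=$4
  awk -v r="$row" -v c="$col" -v w="$cols" -v f="$fps" \
    'BEGIN { printf "%g\n", ((r - 1) * w + (c - 1)) / f }'
}

# Hypothetical wrapper. Usage: grab_cell INPUT ROW COL COLS FPS OUTPUT
# Seeks to the cell's timestamp and saves that one frame at full resolution.
grab_cell() {
  local in=$1 row=$2 col=$3 cols=$4 fps=$5 out=$6
  ffmpeg -hide_banner -loglevel error -y \
    -ss "$(cell_time "$row" "$col" "$cols" "$fps")" -i "$in" \
    -frames:v 1 -q:v 2 "$out"
}
```

For the 4x4 example, `grab_cell video.mp4 2 1 4 4 frame.jpg` pulls the first thumbnail of row 2 (about the 1-second mark) at full size, ready to `Read`. Because the `fps` filter snaps to the nearest source frame, the grabbed frame may differ slightly from the thumbnail.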

For most clips you'll only need pass one. But knowing pass two exists keeps the grid honest: the collage is a *map*, not the *territory*.

## Why this matters more than it sounds

Generative video tools cost real money. Sora-2 runs ~$0.10/sec. Runway, Kling, Veo are similar or higher. If you generate a clip and *can't see it*, you have two options: render frames manually (slow), or trust the model to iterate blind (expensive).

The collage trick collapses both into one cheap ffmpeg call. The assistant can now:

- Verify the clip matches the prompt before you ship it

- Catch issues (wrong character, missing element, weird artifact) without you watching every render

- Suggest concrete edits ("the dam breach is in row 3, not row 2; try a tighter prompt")

- Decide whether a re-render is worth the spend

It changes the loop from *generate → human reviews → maybe re-prompt* to *generate → assistant reviews → propose next move*.

## Where to put it

Save the workflow somewhere the assistant will reach for it next time. In Claude Code that's a memory file. In a custom agent it's a tool definition or system prompt. The point is: don't make the model rediscover the trick on every video.

The barrier to "AI can see video" turned out to be a one-line shell command and a habit of using it. Most accessibility wins look like this — not new capabilities, just removing friction from existing ones.